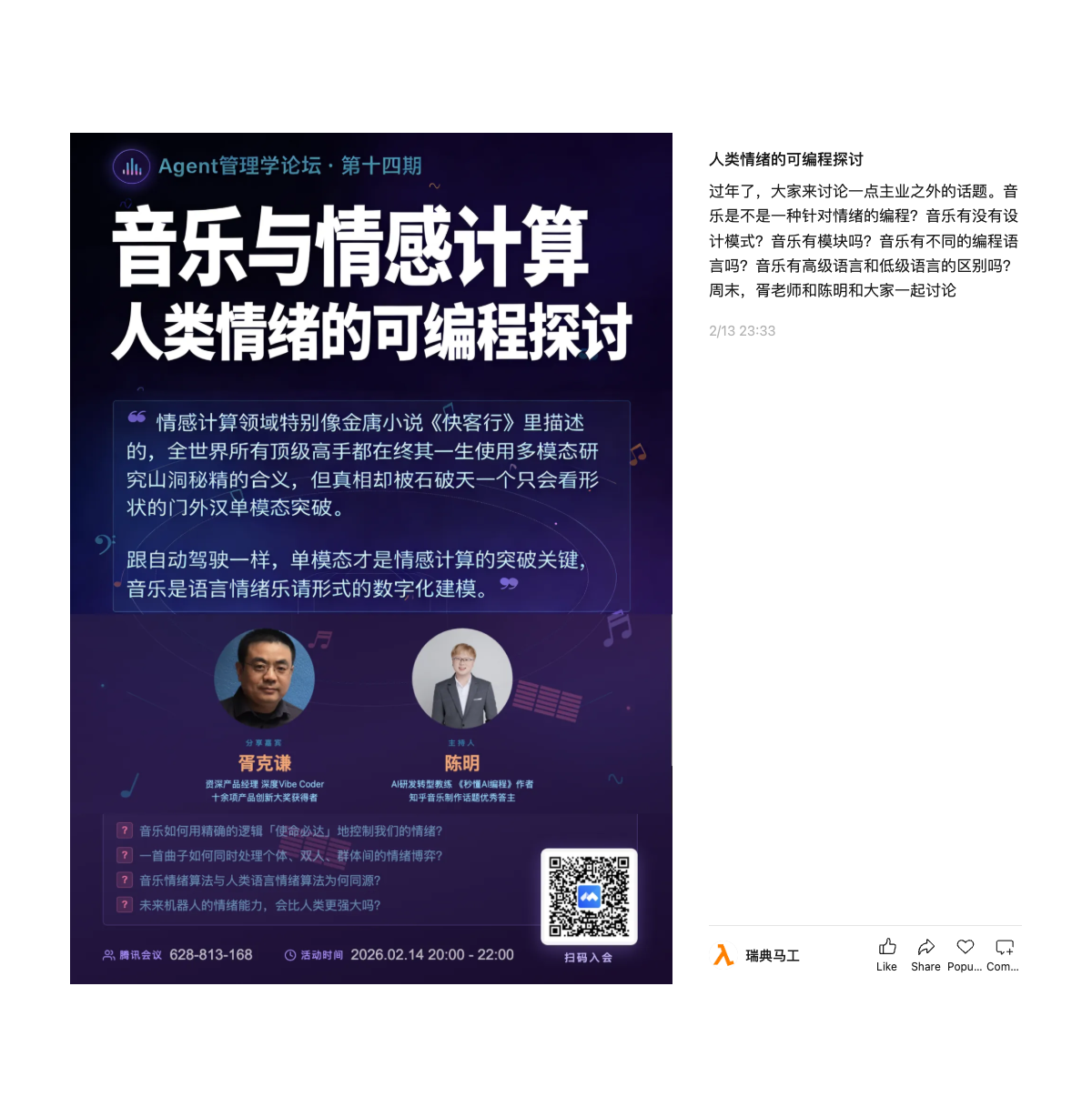

Music and Affective Computing: Programming Human Emotions

Agent Management Forum — Episode 14

It's the Lunar New Year holiday, so let's discuss something outside our day jobs. Is music a form of programming that targets emotions? Does music have design patterns? Does it have modules? Does it have different programming languages, and is there a distinction between high-level and low-level languages in music?

The Thesis

Affective computing is a lot like the cave scene in Jin Yong's martial arts novel "Ode to Gallantry": world-class experts spend their entire lives studying the mysterious inscriptions in the cave with multimodal approaches, yet the puzzle is finally cracked by Shi Potian, an outsider who can only recognise shapes, through a single-modality breakthrough.

As with autonomous driving, a single modality is the key to a breakthrough in affective computing. Music digitally models, in musical form, the emotional expression carried by language.

Speakers

Guest speaker: Xu Keqian, senior product manager, dedicated vibe coder, and winner of over ten product innovation awards.

Moderator: Chen Ming, AI R&D transformation coach, author of "Instantly Understand AI Programming", and top answerer on Zhihu's music production topic.

Discussion Questions

- How does music use precise logic to control our emotions with "mission-critical" reliability?

- How does a single piece of music simultaneously handle emotional dynamics at the level of individuals, pairs, and groups?

- Why do musical emotion algorithms and human language emotion algorithms share the same origins?

- Will robots' emotional capabilities surpass those of humans in the future?

Originally written in Chinese. Translated by the author.